Machine moderators can be used in the pre-moderation stage to flag content for human review, which could improve the accuracy of moderation at that stage.

Machine Moderators in Content Management System Details Essentials for IoT Entrepreneurs

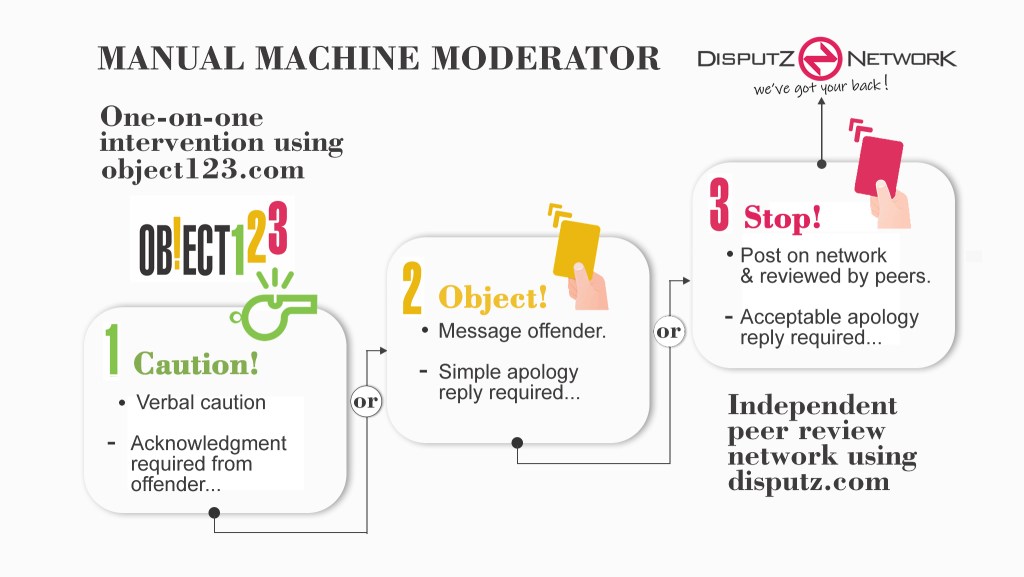

Object123 and the corresponding Disputz Network are the beginnings of a machine moderator for workplace teams. Much as Netflix started in the late 1990s by mailing out DVDs while waiting for the technology to catch up, we are preparing the ground for a time when AI and machine learning are ubiquitous and can automatically moderate our disagreements and disputes. Netflix kept mailing DVDs on request for decades, and like Netflix, we see this as a twenty-year project.

At present, Object123 lets users self-moderate their discussions by objecting, in real time, to any perceived infringement, misconduct, or poor behavior during a discussion. Team members and team leaders can speak up by OBJECTING to any poorly delivered feedback on an idea, using an agreed-upon three-step procedure.

For example, “That will never work!” gets a Step 1. CAUTION for being overly dogmatic and for using absolute language such as “never”. The offender simply acknowledges the offense and rephrases the feedback, for example: “Fair call, how about ‘I don’t think it will work because’.”

However, if the offender wants to challenge the objection, it escalates to a Step 2. OBJECTION, sent as an official message through the Disputz app. If the offender still challenges it, the dispute goes to the final Step 3. STOP and is automatically posted to the Disputz Network, where peers review it independently to give a more objective view of the dispute.
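The three-step procedure described above can be read as a small state machine. The following is a minimal sketch of that reading, not Object123's or Disputz's actual implementation: the `Step` and `Dispute` names, their fields, and the `accept`/`challenge` methods are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Step(IntEnum):
    """The three steps of the objection procedure (names are illustrative)."""
    CAUTION = 1    # raised during the discussion
    OBJECTION = 2  # official message via the app
    STOP = 3       # posted to the peer network for independent review


@dataclass
class Dispute:
    remark: str                      # the feedback that was objected to
    reason: str                      # why it was objected to
    step: Step = Step.CAUTION        # every dispute starts at Step 1
    resolved: bool = False
    rephrased: Optional[str] = None  # the offender's reworded feedback

    def accept(self, rephrased: str) -> None:
        """The offender acknowledges the objection and rephrases."""
        if self.resolved:
            raise ValueError("dispute already resolved")
        self.rephrased = rephrased
        self.resolved = True

    def challenge(self) -> Step:
        """The offender challenges the objection: escalate by one step."""
        if self.resolved:
            raise ValueError("dispute already resolved")
        if self.step is Step.STOP:
            raise ValueError("Step 3 is final; the dispute awaits peer review")
        self.step = Step(self.step + 1)
        return self.step

    @property
    def needs_peer_review(self) -> bool:
        """True once an unresolved dispute has reached Step 3."""
        return self.step is Step.STOP and not self.resolved


# Usage: a remark that is challenged twice ends up awaiting peer review.
dispute = Dispute(remark="That will never work!", reason="absolute language")
dispute.challenge()  # Step 1 -> Step 2. OBJECTION
dispute.challenge()  # Step 2 -> Step 3. STOP
print(dispute.step.name, dispute.needs_peer_review)
```

In this sketch a dispute can end at any step when the offender accepts and rephrases, and Step 3 cannot be escalated further, which mirrors the description of STOP as the final step.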

The anonymous data collected from steps 2 and 3 of the objection procedure is collated and used to help the AI learn, and it will be put to further use as the technology continues to evolve.

Our hypothesis is that when team members understand that they are protected by a procedure and have their backs covered by an independent peer network and a benevolent AI, they will be more likely to share their more radical ideas, without the fear of receiving poorly delivered feedback.
