Feeling psychologically unsafe? What does it even mean, really? Okay, let's break it down.

Amy Edmondson's coded description:

“A shared belief that the team is safe for interpersonal risk-taking.”

| Amy Edmondson | | Desmond Sherlock |
| --- | --- | --- |
| A shared belief… | = | We agree on… |
| that the team is safe… | = | a way to keep the team safe… |
| for interpersonal risk-taking. | = | sharing conflicting ideas. |

Here is my decoded explanation for psychological safety:

“We agree on a way to keep the team safe when sharing conflicting ideas.”

So, we need a way to protect new and fragile ideas when sharing. We need an agreed and safe way to stop people trying to shut us down when we are trying to speak up.

This is not rocket science, and yet everyone seems to have overcomplicated this whole thing. Yes, even Amy Edmondson, Tim Clark, Kim Scott and many more.

OUR SOLUTION

Here is my agile three-step solution to keep us safe when sharing conflicting ideas.

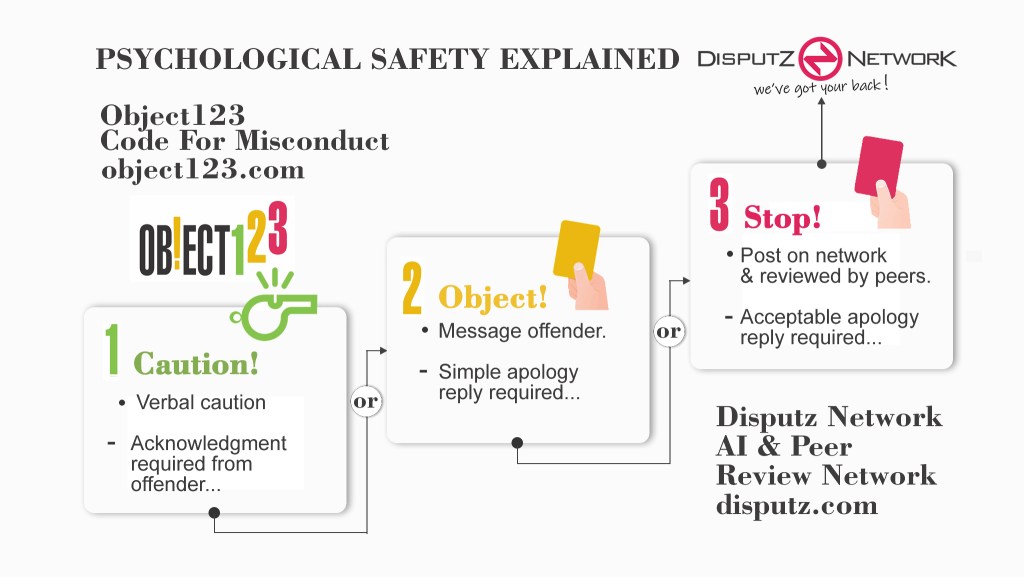

We simply agree to OBJECT to anyone’s behavior, directly and in real time, when we are in a disagreement and someone tries to shut us down. We do it in three steps, of course, from a mild Caution to a full-on Stop. I call this the Object123 Code of Misconduct, and it goes like this:

Object123 Code of Misconduct

- Caution: Caution the violator if you feel uncomfortable with their behavior or misconduct, and receive an acknowledgment. If the caution is challenged, escalate to an…

- Object: Send the violator an official objection using the Disputz app and receive a simple apology message in return, or escalate to a…

- Stop: The dispute is posted automatically on the Disputz AI & Peer Review Network to be reviewed and voted on if necessary. A recommendation is then made to their team.
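For readers who think in code, the three steps above can be sketched as a tiny escalation ladder. This is only an illustration of the flow as described here, not how the Disputz app works: the names `Step`, `ACCEPTED`, `CHALLENGED`, `next_step` and `run_dispute` are all hypothetical, and I am assuming each step has just two outcomes, either it is accepted (an acknowledgment or an apology) or it is challenged and escalates.

```python
from enum import Enum


class Step(Enum):
    CAUTION = 1
    OBJECT = 2
    STOP = 3


# Hypothetical response labels (an assumption for this sketch): a step is
# either accepted (acknowledgment or apology) or challenged.
ACCEPTED = "accepted"
CHALLENGED = "challenged"


def next_step(current, response):
    """Return the step to escalate to, or None if the ladder ends here."""
    if current is Step.STOP:
        # Stop hands the dispute over for review; there is no further step.
        return None
    if response == ACCEPTED:
        return None
    if response == CHALLENGED:
        return Step(current.value + 1)
    raise ValueError(f"unknown response: {response!r}")


def run_dispute(responses):
    """Walk the ladder for a sequence of responses and return the path taken."""
    step = Step.CAUTION
    path = [step]
    for response in responses:
        step = next_step(step, response)
        if step is None:
            break
        path.append(step)
    return path
```

Calling `run_dispute([CHALLENGED, ACCEPTED])` walks from Caution to Object and ends there, because the objection was met with an apology. Two challenges in a row take the dispute all the way to Stop.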

We also use our dispute AI and peer review network as a backup for when our code of misconduct does not resolve a dispute. What is most important about this code, I believe, is that it can be used for machine learning, so that our Disputz AI can also help us resolve our disputes in the future.
